Total Cost of Ownership

Planning for the Real Cost of AI in Engineering

Most ROI calculations for AI tooling share the same quiet flaw. They project productivity gains from research conducted on developers who use AI substantively every day, then price the investment at the tier where developers use it occasionally. The math looks compelling, but it isn’t realistic.

Three Tiers

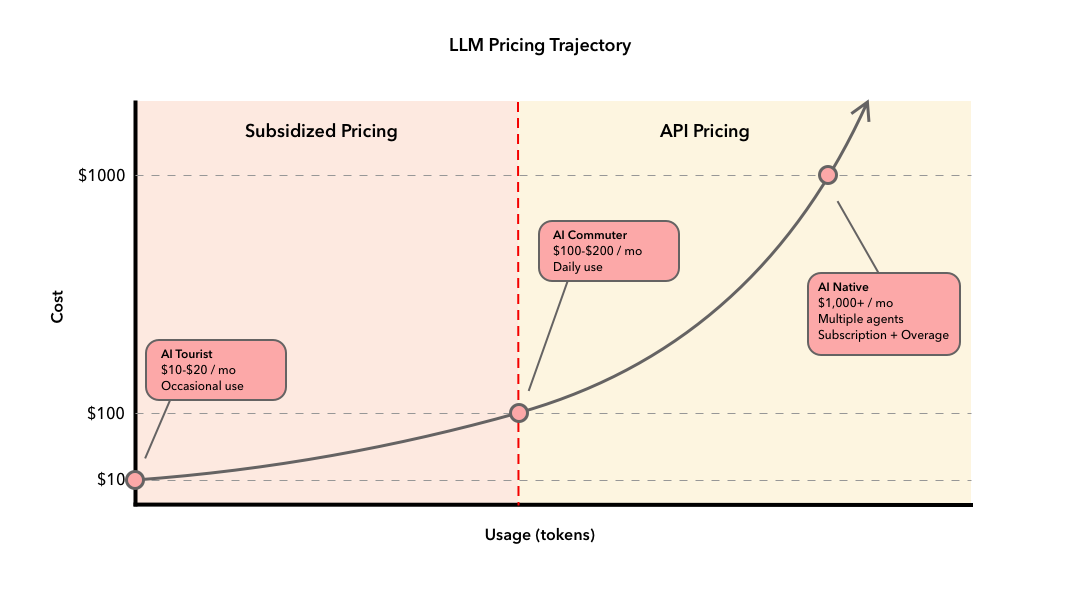

The AI coding tool market has sorted itself into three adoption tiers, each with different usage patterns and a different relationship to the productivity research teams cite when making the investment case.

AI Tourists spend $10-20 per developer per month. Tourists use AI the way most of us use Stack Overflow: occasionally, when stuck, for quick lookups or autocomplete on familiar patterns. AI hasn’t changed how they work.

AI Commuters spend $100-200 per developer per month. Commuters use AI across the full SDLC: planning, generation, refactoring, debugging, code review. This is what “we use AI for development” actually means when organizations report productivity gains.

AI Natives spend $1,000 or more per developer per month. This tier runs full agentic workflows: multiple parallel agents executing multi-file tasks autonomously, agents that draft specs, generate code, run tests, and open pull requests without human prompting at each step.

The productivity research that justifies AI investment was conducted on substantive users. Abi Noda, whose firm DX has tracked AI adoption across hundreds of engineering organizations, puts it plainly: developers save about three hours per week when they’re actually using the tools. Developers in the heaviest usage quartile show measurable gains. Light users show minimal impact. A developer doing real agentic work throughout the day is a Commuter. That’s the realistic baseline for budgeting.

Why Commuter Is the Realistic Baseline

The Commuter range isn’t a power-user premium. It’s what a developer who uses agentic coding tools throughout a normal workday actually consumes.

Here’s why. Agentic coding tools don’t send a single prompt and wait. Each interaction is a multi-turn conversation: the system prompt, the full conversation history, the contents of files pulled into context, and tool-use tokens from file reads, searches, and test runs. A straightforward “refactor this function” request might load tens of thousands of tokens before the model generates a single line. A session working across multiple files on a mid-size codebase consumes more. As the day progresses, each follow-up request carries the accumulated history of everything before it. Context compounds.
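To make the compounding concrete, here is a minimal sketch in Python. The token figures are hypothetical placeholders, not measurements, and the model deliberately ignores prompt caching and context compaction, both of which can lower what a vendor actually bills. It only shows the shape of the growth when every request resends the history before it.

```python
def cumulative_input_tokens(base_context: int, tokens_per_turn: int, turns: int) -> int:
    """Total input tokens sent over a session in which every request
    resends the base context plus all prior turns (no caching assumed)."""
    total = 0
    history = 0
    for _ in range(turns):
        total += base_context + history  # each request carries everything before it
        history += tokens_per_turn       # this exchange joins the history
    return total


# Hypothetical session: 20k tokens of base context, 2k tokens added per turn.
short_session = cumulative_input_tokens(20_000, 2_000, 10)
long_session = cumulative_input_tokens(20_000, 2_000, 30)
print(short_session, long_session)
```

Under these assumed numbers, tripling the number of turns roughly quintuples the input tokens sent, because the history term grows quadratically with session length while the base context grows only linearly.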

The result is that a developer using agentic tools regularly throughout the day lands in the $100-200 per developer per month range. Anthropic publishes an average daily cost figure consistent with this range for API users. That average reflects normal development work: planning a feature, iterating on implementation, debugging, reviewing diffs. The math works out roughly the same whether you’re on a flat-rate subscription that covers that usage or paying API rates directly.

For organizations deploying at scale, the structure matters. Team plans bundle access and usage into a per-seat fee. Enterprise plans separate the two: the seat fee covers access, and token consumption is metered on top at API rates. Either way, the underlying consumption is the same. A developer doing substantive agentic work costs roughly what the Commuter tier costs, regardless of how the invoice is structured.

What You’re Actually Paying For

Subscription pricing obscures a meaningful gap between what you pay and what you consume. Flat-rate plans are priced well below actual API cost at heavy usage levels. That gap is a vendor subsidy designed to build habit and market share.

Cursor’s June 2025 switch from request-based pricing to a credit model tied to actual API consumption was an early signal of where this is heading. The developer backlash was sharp enough that Cursor apologized and issued refunds. That reaction tells you something: developers had become dependent on flat-rate pricing, and the dependency became visible the moment it was threatened.

Commuter adoption also requires a stack, not a single tool: an IDE assistant, a code review tool, a chat model. At honest per-seat pricing, that’s $100-200 per developer per month in subscriptions alone, before any overhead. A safer planning assumption runs the ROI at 2-3x current subscription pricing. If the investment still works, proceed with confidence. If it only pencils at today’s subsidized rates, that’s a pricing dependency worth naming explicitly.

The Hidden Costs

Subscription pricing, even at honest Commuter rates, isn’t the full story. Five cost categories don’t appear on vendor pricing pages.

Rework and quality debt. CodeRabbit’s analysis of 470 pull requests found AI-generated code produces 1.7x more defects than human-written code, with security vulnerabilities appearing twice as often. GitClear’s analysis of 211 million lines of code found code duplication surged eight-fold between 2022 and 2024. A Harness survey found 67% of developers now spend more time debugging AI-generated code than writing it themselves. The tool generating more defects is simultaneously increasing the time required to catch them.

Review bottleneck. Agentic coding drives 98% more pull requests and 154% larger PRs, according to Faros AI’s analysis of 10,000 developers, while review time jumps 91% and organizational delivery metrics stay flat. AI generates a 500-line PR at near-zero marginal cost. A senior engineer still needs two hours to evaluate it. Accelerating code generation without modernizing review doesn’t improve throughput. It moves the bottleneck downstream.

Adoption ramp. Productivity gains require rebuilding the workflow around the tools. CI/CD pipelines need hardening to handle the volume. Teams need to develop and standardize a shared library of agent skills and workflows before individuals can work efficiently. Best practices are still maturing, and that instability has a cost. What worked six months ago may already be obsolete.

Governance and compliance. Any serious AI implementation requires governance: access controls, data handling policies, audit logging, and security review processes. For publicly traded companies and large organizations, that bar is higher still. Veracode found that AI introduced security vulnerabilities in 45% of coding tasks, because models optimize for tests passing rather than security properties. The tooling, training, and remediation work to meet that bar doesn’t appear on the vendor pricing page.

Skill erosion. Anthropic’s January 2026 randomized study found developers using AI scored 17% lower on code comprehension than those who coded manually, with debugging showing the largest gap. The capability that makes AI output valuable is the human judgment to evaluate it. A workforce that generates code quickly but can’t debug when AI fails, can’t understand system architecture, and can’t maintain code over the long term is a compounding liability, not a productivity asset.

What Defensible Budgeting Looks Like

Three moves make the calculation honest. First, budget at Commuter costs with 30-40% Year One overhead for the hidden categories. Use cases that still deliver positive ROI at that number are worth pursuing. Use cases that only pencil at Tourist pricing aren’t ready for workflow-level investment.
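As a rough illustration of the first move, the sketch below grosses up the Commuter range by the 30-40% overhead and sets it against the three-hours-per-week figure cited earlier. The loaded hourly rate and the number of working weeks are hypothetical inputs you would replace with your own, and the value function optimistically treats every saved hour as fully recovered value.

```python
def year_one_cost(monthly_tool_cost: float, overhead_rate: float) -> float:
    """Annual tool spend per developer, grossed up for hidden-cost overhead."""
    return monthly_tool_cost * 12 * (1 + overhead_rate)


def year_one_value(hours_saved_per_week: float,
                   loaded_hourly_rate: float,
                   working_weeks: int) -> float:
    """Annual value per developer if every saved hour converts to value
    (an optimistic assumption)."""
    return hours_saved_per_week * working_weeks * loaded_hourly_rate


# Commuter range and overhead range from the article.
low_cost = year_one_cost(100, 0.30)
high_cost = year_one_cost(200, 0.40)

# Hypothetical: $100/hour loaded rate, 46 working weeks per year.
value = year_one_value(3, 100.0, 46)

print(f"Year One cost per developer: ${low_cost:,.0f} to ${high_cost:,.0f}")
print(f"Year One value per developer (optimistic): ${value:,.0f}")
```

The useful comparison is between the high end of the cost range and a discounted version of the value figure, since saved hours rarely convert to delivered output one for one.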

Second, stress-test the subsidy. Build a second scenario at 2-3x current subscription pricing. A decision that depends on a specific vendor pricing structure should acknowledge that dependency in the risk column, alongside technical risk.
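One way to run that second scenario is sketched below. The function names, the annual value figure, and the fixed overhead amount are all hypothetical. Only the 1x, 2x, and 3x multipliers come from the text above.

```python
def stress_test_pricing(monthly_price: float,
                        annual_value: float,
                        annual_overhead: float = 0.0,
                        multipliers: tuple = (1.0, 2.0, 3.0)) -> dict:
    """Return {price multiplier: annual net value per developer}."""
    return {
        m: annual_value - (monthly_price * m * 12 + annual_overhead)
        for m in multipliers
    }


def is_pricing_dependent(scenarios: dict) -> bool:
    """True if the case is positive at the lowest multiplier
    but negative at some stressed price."""
    base = scenarios[min(scenarios)]
    return base > 0 and any(net < 0 for net in scenarios.values())


# Hypothetical inputs: $150/month today, $4,000/year of realized value,
# $600/year of fixed overhead per developer.
scenarios = stress_test_pricing(150, 4_000, annual_overhead=600)
for multiplier, net in scenarios.items():
    print(f"{multiplier:.0f}x pricing: net ${net:,.0f} per developer per year")
print("Pricing dependency:", is_pricing_dependent(scenarios))
```

With these assumed inputs the case is positive at today’s price and negative at 2x, which is exactly the kind of result that belongs in the risk column.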

Third, treat engineering depth as a strategic asset. The fundamentals that let developers evaluate AI output are the same capabilities that provide leverage when vendor pricing shifts. Minimum competency floors aren’t about slowing adoption. They preserve the auditing capability that makes AI output usable.

The ROI case for AI tooling works when it is run at honest cost inputs, with realistic productivity estimates, for use cases that match the research conditions. The number is more modest than the vendor pitch, and the cost is higher than the pricing page suggests. But there’s genuine value here for teams that measure it correctly.

I’m curious whether your team has done the full TCO version and where it lands. Does the investment hold at Commuter costs with the hidden categories included? That’s the conversation worth having before the budget is set.